Anthropic adds dreaming, outcomes, and multiagent orchestration to Claude Managed Agents

Anthropic's new Managed Agents features let AI agents review their own work, self-improve between sessions, and parallelize tasks. Here is what that means for businesses building with AI.

By Springvanta

On May 6, 2026, Anthropic shipped three new capabilities for Claude Managed Agents: dreaming, outcomes, and multiagent orchestration. Together they address problems every business runs into when it moves past demo-stage AI: agents that forget everything between sessions, produce inconsistent output, and choke on work too big for a single context window.

If your business is evaluating AI for customer intake, lead qualification, or voice-based workflows, these features change what "production-ready" looks like for an AI agent.

What Managed Agents actually does

Managed Agents is Anthropic's hosted platform for running AI agents on its infrastructure. You define what the agent should do, give it tools and a sandbox, and Anthropic handles the execution loop, session persistence, and sandboxing. It launched in public beta in April 2026. This week's update adds three features that move it from "run a task" toward "run an operation."

Dreaming: agents that learn between sessions

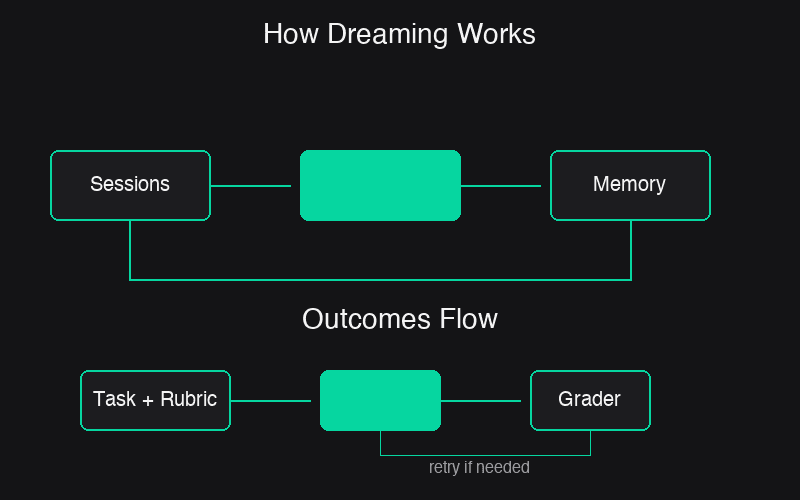

Dreaming is a scheduled background process that reviews past agent sessions, extracts patterns, and writes learnings back to the agent's memory store. The metaphor is deliberate. Much as sleep consolidates human memory, dreaming processes what happened during the day and files it away in a form that is useful later.

The practical advantage: a single agent running an intake workflow can't see its own recurring mistakes. Dreaming can. It surfaces patterns across sessions, such as which types of inquiries the agent mishandles, which workflows it converges on, and which preferences are consistent across a team. It then restructures the memory store so that the store stays useful as it grows.
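To make the idea of a cross-session pattern concrete, here is a minimal sketch of the kind of aggregation involved. It is not Anthropic's implementation: the session records, their field names, and the `recurring_mistakes` helper are all hypothetical, and a real dreaming run works from actual session history rather than hand-written dictionaries.

```python
from collections import Counter

# Hypothetical session summaries. The field names are assumptions made
# for illustration, not a Managed Agents data format.
sessions = [
    {"id": "s1", "inquiry_type": "billing", "handled_correctly": False},
    {"id": "s2", "inquiry_type": "scheduling", "handled_correctly": True},
    {"id": "s3", "inquiry_type": "billing", "handled_correctly": False},
    {"id": "s4", "inquiry_type": "refund", "handled_correctly": False},
    {"id": "s5", "inquiry_type": "scheduling", "handled_correctly": True},
]


def recurring_mistakes(sessions, min_count=2):
    """Return inquiry types that were mishandled in at least `min_count` sessions."""
    misses = Counter(
        s["inquiry_type"] for s in sessions if not s["handled_correctly"]
    )
    return {kind: n for kind, n in misses.items() if n >= min_count}


print(recurring_mistakes(sessions))  # {'billing': 2}
```

No single session reveals that billing inquiries are a weak spot. The pattern only appears once the sessions are viewed together, which is the gap dreaming is meant to close.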

Memory stores are filesystem-based. When you attach one to a session, it mounts at /mnt/memory/{store-name} inside the agent's sandbox. The agent reads and writes files there with standard file tools. You choose whether dreaming updates memory automatically or queues changes for human review.
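Because a memory store is just a directory of files, working with one looks like ordinary file I/O. The sketch below assumes a hypothetical store named `intake-notes` and a made-up layout of one Markdown file per topic; only the `/mnt/memory/{store-name}` mount path comes from the description above. So that the example runs anywhere, it points at a temporary directory instead of the real mount.

```python
import tempfile
from pathlib import Path


def memory_root(store_name: str, mount_base: str = "/mnt/memory") -> Path:
    """Build the path where a store is mounted inside the sandbox."""
    return Path(mount_base) / store_name


def append_note(root: Path, topic: str, note: str) -> Path:
    """Append one bullet to a per-topic file (this layout is an assumption)."""
    root.mkdir(parents=True, exist_ok=True)
    path = root / f"{topic}.md"
    with path.open("a", encoding="utf-8") as f:
        f.write(f"- {note}\n")
    return path


def read_notes(root: Path, topic: str) -> list[str]:
    """Return the bullets stored for a topic, or an empty list if none exist."""
    path = root / f"{topic}.md"
    if not path.exists():
        return []
    lines = path.read_text(encoding="utf-8").splitlines()
    return [line[2:] for line in lines if line.startswith("- ")]


# Stand-in for the sandbox mount so the sketch runs on any machine.
with tempfile.TemporaryDirectory() as base:
    root = memory_root("intake-notes", mount_base=base)
    append_note(root, "callers", "Most callers ask about weekend hours first.")
    append_note(root, "callers", "Confirm the callback number before ending the call.")
    notes = read_notes(root, "callers")

print(notes)
```

Inside a real session the agent would use its own file tools rather than Python helpers like these, but the mental model is the same: memory is a set of files the agent can read and write.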

For a business running an AI intake agent across hundreds of customer conversations a week, dreaming means the agent gets better at understanding your specific callers without manual prompt adjustments.

Outcomes: a built-in quality gate

Outcomes lets you write a rubric that defines what "done right" looks like. A separate grader agent evaluates the output against your criteria in its own context window, so it can't be influenced by the producing agent's reasoning. If the output falls short, the grader identifies what needs to change and the agent takes another pass.
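The produce, grade, and revise cycle described above can be sketched in a few lines. This is an illustration of the control flow only, not the Managed Agents API: `run_with_rubric`, `Verdict`, and the toy producer and grader are hypothetical stand-ins, and the rubric here is a simple required-fields check. The grader receives only the output, never the producer's reasoning, which mirrors the separate context window.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    passed: bool
    feedback: str


def run_with_rubric(
    produce: Callable[[str, Optional[str]], str],
    grade: Callable[[str], Verdict],
    task: str,
    max_passes: int = 3,
) -> tuple[str, int]:
    """Produce, grade, and retry with feedback until the rubric passes."""
    if max_passes < 1:
        raise ValueError("max_passes must be at least 1")
    feedback: Optional[str] = None
    output = ""
    for attempt in range(1, max_passes + 1):
        output = produce(task, feedback)
        verdict = grade(output)  # the grader sees the output only
        if verdict.passed:
            return output, attempt
        feedback = verdict.feedback
    return output, max_passes  # best effort after the final pass


# Toy rubric: an intake record must contain all three fields.
REQUIRED_FIELDS = ("name", "phone", "reason")


def toy_grade(output: str) -> Verdict:
    missing = [f for f in REQUIRED_FIELDS if f"{f}=" not in output]
    return Verdict(not missing, "missing: " + ", ".join(missing) if missing else "")


def toy_produce(task: str, feedback: Optional[str]) -> str:
    # The first attempt omits the phone number; the retry includes it.
    if feedback is None:
        return "name=Ada; reason=consult"
    return "name=Ada; phone=555-0100; reason=consult"


result, passes = run_with_rubric(toy_produce, toy_grade, "intake call summary")
print(passes, result)  # 2 name=Ada; phone=555-0100; reason=consult
```

The cap on passes is a design choice in this sketch: without it, an output that can never satisfy the rubric would loop forever.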

This matters for tasks where the difference between acceptable and unacceptable is subtle. Think brand voice compliance in marketing copy, completeness checks in legal intake, or data format requirements in CRM data entry. Anthropic's internal benchmarks show outcomes improved task success by up to 10 percentage points over a standard prompting loop, with the biggest gains on the hardest problems.

For businesses evaluating AI tools, outcomes narrows the gap between "the AI can do this" and "the AI can do this reliably enough to trust in production." You define the standard. The system enforces it without a human reviewing every output.

Multiagent orchestration: parallelism with shared context

Multiagent orchestration lets a lead agent decompose a task and assign subtasks to specialist agents. Each specialist gets its own model, prompt, and tools. They work in parallel on a shared filesystem and contribute findings back to the lead agent's context.

Netflix has already deployed this for its platform engineering team. The pattern is familiar in operations: one agent investigates an issue while others fan out through logs, metrics, deploy history, and support tickets.

For a business running an AI receptionist or intake system, multiagent orchestration means a single incoming inquiry can trigger parallel workflows. One specialist qualifies the lead against your criteria. Another enriches the CRM record. A third checks scheduling availability. The lead agent synthesizes all of it into a structured response.
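The fan-out and synthesis pattern in this example can be sketched with ordinary Python concurrency. The three specialist functions, the inquiry fields, and the `handle_inquiry` helper are hypothetical stand-ins: in Managed Agents each specialist is an agent with its own model, prompt, and tools, not a local function, so treat this as an illustration of the shape of the workflow rather than how the platform is invoked.

```python
from concurrent.futures import ThreadPoolExecutor


def qualify_lead(inquiry: dict) -> dict:
    # Hypothetical criterion: a budget of at least 1000 counts as qualified.
    return {"qualified": inquiry["budget"] >= 1000}


def enrich_crm(inquiry: dict) -> dict:
    # Stand-in enrichment: derive the company domain from the email address.
    return {"company_domain": inquiry["email"].split("@")[1]}


def check_availability(inquiry: dict) -> dict:
    # Stub: a real specialist would query a calendar system.
    return {"slot_offered": inquiry["preferred_day"]}


SPECIALISTS = {
    "qualification": qualify_lead,
    "crm": enrich_crm,
    "scheduling": check_availability,
}


def handle_inquiry(inquiry: dict) -> dict:
    """Run every specialist in parallel, then merge the results for the lead."""
    with ThreadPoolExecutor(max_workers=len(SPECIALISTS)) as pool:
        futures = {name: pool.submit(fn, inquiry) for name, fn in SPECIALISTS.items()}
        return {name: future.result() for name, future in futures.items()}


inquiry = {"email": "ada@example.com", "budget": 2500, "preferred_day": "Tuesday"}
summary = handle_inquiry(inquiry)
print(summary)
```

Each specialist works from the same inquiry and returns its own slice of the answer, and the lead step only has to merge the slices into one structured response.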

Every step is traceable in the Claude Console. You can see which agent did what, in what order, and why.

Why this matters for business AI adoption

The gap between an AI demo and AI in production has always been about reliability, not capability. The three new features address specific failure modes that businesses encounter:

- Agents that forget between sessions. Memory and dreaming create persistent learning.

- Agents that produce inconsistent output. Outcomes enforces a quality bar without human review on every run.

- Agents that can't handle complex workflows. Multiagent orchestration parallelizes work that exceeds a single context window.

Netflix, Rakuten, Notion, and Wisedocs have disclosed production deployments. Rakuten reported a 97% reduction in first-pass errors, 27% lower cost, and 34% latency improvement on long-running agent tasks after adopting Managed Agents with memory.

For businesses considering AI intake, voice agents, or automated lead qualification, the infrastructure for production-grade AI agent operations just got more complete. The question is no longer whether AI can handle the task. It's whether your data and processes are ready for an agent that improves itself.

Sources

- New in Claude Managed Agents: dreaming, outcomes, and multiagent orchestration (Anthropic, May 6, 2026)

- Anthropic updates Claude Managed Agents with three new features (9to5Mac, May 7, 2026)

- Anthropic will let its managed agents dream (The New Stack, May 7, 2026)